
There are around 20-30 of those, but they’re not used by the small kernels that are being called a lot.) I have about 30 textures, which would account for about 15uS, which doesn’t take me anywhere near the 150uS I’m seeing. The numbers I’ve heard are on the order of 5uS plus about 0.5uS per texture/surface. /article-new/2020/02/shazamshortcut.jpg)

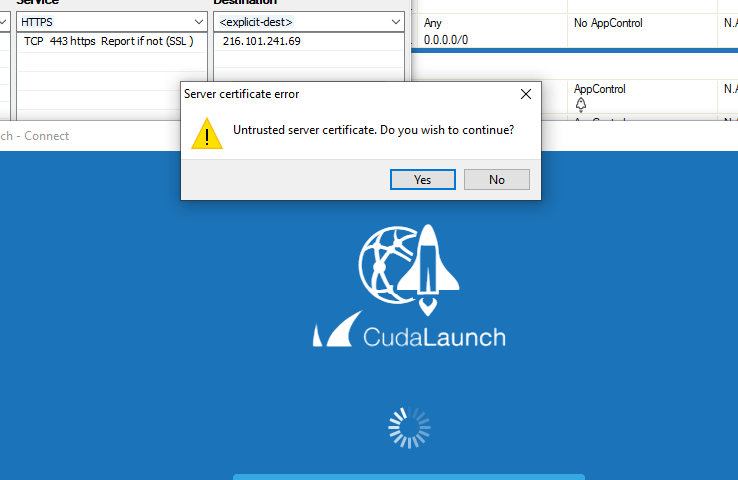

Launching kernels is relatively expensive, but it sounds like this is an order of magnitude slower than it should be. This takes the smaller kernel launches and makes them much slower than they should be. GPU can be slower because of clocking reasons.I’m profiling a slow application, and I’m seeing that every kernel launch’s cudaLaunch call is taking around 150-200uS. GPU is often a waste of resources for these tasks and it is better to offload them to the VIC. Is it possible to collapse operations into a single kernel? The application is currently GPU bound.Some conclusions that can be retrieved from the timeline presented above: Synchronisation: is there a symptom of over-synchronisation?.Overlapping: is the communication being overlapped by the computation?.Streams: are the kernels being executed in parallel?.API calls: synchronisation calls, memory copies, and their durations.GPU general occupation: is it mostly busy executing kernels?.Please, refer to section of the documentation for more information.įrom the timeline, the following details should at least be analyzed:
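To put the launch-overhead figures from the question in perspective, and to see why collapsing operations into a single kernel can matter, here is a back-of-the-envelope sketch in Python. The 5 µs base and 0.5 µs per texture/surface are the hearsay figures quoted in the text, not measurements; the function names and the launch counts in the last lines are hypothetical and only serve the illustration.

```python
# Rule-of-thumb figures quoted in the text (hearsay, not measured values).
BASE_LAUNCH_US = 5.0   # assumed fixed cost of one kernel launch
PER_TEXTURE_US = 0.5   # assumed extra cost per bound texture/surface


def expected_launch_us(num_textures: int) -> float:
    """Estimated cost of a single kernel launch, in microseconds."""
    return BASE_LAUNCH_US + PER_TEXTURE_US * num_textures


def total_overhead_us(num_launches: int, per_launch_us: float) -> float:
    """Total time spent in launch overhead alone, in microseconds."""
    return num_launches * per_launch_us


# 30 textures: 15 us for the textures plus the 5 us base cost.
expected = expected_launch_us(30)
observed = 150.0  # lower end of the 150-200 us range reported above
slowdown = observed / expected

# Hypothetical workload: 1000 small launches collapsed into 100 larger ones.
before_us = total_overhead_us(1000, observed)
after_us = total_overhead_us(100, observed)

print(f"expected per launch: {expected} us")
print(f"observed / expected: {slowdown}x")
print(f"overhead saved by fusing: {(before_us - after_us) / 1000.0} ms")
```

Under these assumptions the observed launch cost is several times the estimate, which matches the "order of magnitude" impression in the question. When the per-launch cost is that high, reducing the number of launches is the most direct lever.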

Depending on your setup, Nsight may be very useful, since it integrates a user interface and guides the developer through the analysis process. In fact, NVIDIA strongly recommends Nsight for profiling. You can find more information in the user manual for the NVIDIA profiling tools for optimizing the performance of CUDA applications. Given that most of RidgeRun's work is on Tegra, we focus on nvprof first and then on Nsight. You can learn how to use Nsight properly and update this wiki later :D

By default, nvprof reports information about the API calls and how much time the kernels consume:

    GPU Device 0: "GeForce GT 640M LE" with compute capability 3.0
    ==27694== NVPROF is profiling process 27694, command: matrixMul
    Performance= 35.35 GFlop/s, Time= 3.708 msec, Size= 131072000 Ops, WorkgroupSize= 1024 threads/block
    Checking computed result for correctness: OK
    Note: For peak performance, please refer to the matrixMulCUBLAS example.

This gives you the first hint about what is taking too much time to complete:

- If your kernel is taking too much time, the track to follow is lowering the kernel execution time.
- If the kernel execution is less than 80% of the time and communication takes the rest, follow the I/O optimisation path.
- If most of the time is consumed by synchronisation, apply memory and I/O optimisations in order to get rid of the synchronisation barriers.

You will find more details in the following sections.

The information presented by nvprof may not be enough for your purposes, and you may need a higher level of detail. For that purpose, you can use the Visual Profiler or Nsight. With either of those tools, you end up with a timeline plot similar to the one shown above.

    nvvp -vm /usr/lib/jvm/java-8-openjdk-amd64/jre/bin/java

4. Load the output file in Timeline datafile

A similar process is followed when using multi-process. Let's say that the customer needs to execute the pipeline five times. It is possible to model the behavior by using MPI.
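The nvprof triage rules described in the text (kernel-bound, I/O-bound, or synchronisation-bound) can be sketched as a small Python helper. This is only an illustration: the function name `triage` and its inputs are hypothetical, the 80% threshold comes from the text, and reading "most of the time" as more than 50% is an assumption. The timings would have to be taken by hand from the profiler summary.

```python
def triage(kernel_ms: float, transfer_ms: float, sync_ms: float) -> str:
    """Suggest which optimisation path to follow from summary timings.

    Inputs are total times for kernel execution, memory transfers
    (communication) and synchronisation calls, all in the same unit.
    """
    total = kernel_ms + transfer_ms + sync_ms
    if total <= 0:
        raise ValueError("no time recorded")

    # Assumption: "most of the time" is read as more than half of the total.
    if sync_ms / total > 0.5:
        return "synchronisation"  # remove barriers via memory/I/O optimisation

    # From the text: kernels below 80% of the time point to the I/O path.
    if kernel_ms / total < 0.8:
        return "io"

    # Otherwise the kernels dominate: lower the kernel execution time.
    return "kernel"


# Hypothetical timings, for illustration only.
print(triage(kernel_ms=3.7, transfer_ms=0.2, sync_ms=0.1))
print(triage(kernel_ms=5.0, transfer_ms=4.0, sync_ms=1.0))
print(triage(kernel_ms=2.0, transfer_ms=1.0, sync_ms=7.0))
```

The synchronisation check runs first so that a run dominated by barriers is not misreported as I/O-bound merely because its kernel share is also below 80%.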
